#BITNET

Learn how to install bitnet.cpp, download the BitNet b1.58 model, and run a fully local AI chat and inference server on your machine. This beginner guide shows you how to run tiny AI models without cloud dependencies. https://www.kdnuggets.com/run-tiny-ai-models-locally-using-bitnet-a-beginner-guide #AIagent #AI #GenAI #AIInfrastructure #BitNet

Microsoft BitNet: 100B Param 1-Bit model for local CPUs

https://github.com/microsoft/BitNet

#HackerNews #Microsoft #BitNet #100BParam #1BitModel #LocalCPUs #MachineLearning #AI

🚀 Want to run BitNet-b1.58-2B-4T locally? The new setup_env.py script automates a CMake build of the C++ backend, turning Python-driven setup into a fast inference engine. Perfect for hobbyists and researchers eager to experiment with large AI models offline. Dive into the details and see how easy open-source deployment can be! #BitNet #Python #CMake #LocalInference

🔗 https://aidailypost.com/news/python-setupenvpy-builds-bitnet-b158-2b-4t-c-backend-via-cmake

Built a 1.58-bit LLM engine that runs at 117 tokens/second on a single CPU core with Rust and AVX-512, but a bug in the activation layer makes the output always <unk>. Need help with: (1) weight tying in BitNet: is a scale factor missing? (2) How to scale the integer accumulators from VPOPCNTDQ before feeding them into RMSNorm/SiLU. The project is open source, zero-copy, with no heap allocation. #Rust #AVX512 #LLM #MachineLearning #AI #R3Engine #BitNet #LocalAI #HPC #Inference #trítuệnhân tạo #môhìnhtonngẫu #xửlýsongsong #tinhoccao

https://www.reddit.
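A minimal sketch of the rescaling step asked about in question (2), assuming the scheme usually described for BitNet b1.58: weights are ternarized with an absmean scale and activations are quantized to 8-bit integers with an absmax scale. It is not the poster's Rust engine, and the function names are hypothetical. The point it illustrates is that the integer accumulator has to be multiplied by both scales before it reaches RMSNorm or SiLU.

```python
def quantize_weights_absmean(W):
    """Ternarize W with an absmean scale, so that W ~= beta * W_q."""
    flat = [abs(w) for row in W for w in row]
    beta = sum(flat) / len(flat) or 1.0  # fall back to 1.0 for an all-zero matrix
    # Round to the nearest integer, then clip into {-1, 0, 1}.
    W_q = [[max(-1, min(1, round(w / beta))) for w in row] for row in W]
    return W_q, beta


def quantize_activations_absmax(x, bits=8):
    """Quantize x to signed integers, so that x ~= x_q * gamma / qmax."""
    qmax = 2 ** (bits - 1) - 1
    gamma = max(abs(v) for v in x) or 1.0
    x_q = [max(-qmax, min(qmax, round(v * qmax / gamma))) for v in x]
    return x_q, gamma, qmax


def ternary_linear(W_q, beta, x):
    """Integer matvec followed by rescaling back to real-valued units."""
    x_q, gamma, qmax = quantize_activations_absmax(x)
    # Integer accumulation: the part an add/popcount kernel would compute.
    acc = [sum(w * v for w, v in zip(row, x_q)) for row in W_q]
    # Undo both quantization scales before any normalization or activation.
    return [a * beta * gamma / qmax for a in acc]


# Illustrative values only.
W = [[0.9, -0.05, -1.1],
     [0.4, 1.2, -0.7]]
x = [1.0, -2.0, 0.5]
W_q, beta = quantize_weights_absmean(W)
y = ternary_linear(W_q, beta, x)
```

If the rescaling is skipped, the values entering RMSNorm/SiLU are off by a factor of `beta * gamma / qmax`. That is one possible cause of degenerate output, though the sketch cannot confirm it is the cause here.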

We are moving from the MatMul era to "additive AI" with BitNet (ternary weights), L‑Mul (addition in place of multiplication), and mHC (ensuring stability at scale). If 70B+ models can run on just 1/100 of the energy, today's GPUs will become obsolete and ASICs specialized for addition will be needed. Do you think we should stop buying GPUs and focus on additive architectures? #AI #AdditiveAI #BitNet #L_Mul #mHC #CôngNghệ #TríTuệNhânTạo

Episode 166: 30 years of commercial Internet in Brazil (Part A)

https://retropolis.com.br/2025/08/06/episodio-166-parte-a/

#Podcast #3270 #Alternex #ARPA #ARPANET #ASCIIArt #BITNET #BobKhan #CETEL #CGIBr #ControlData #DARPA #DEC #Embratel #enlace #FakeNews #FAPESP #Fermilab #GuerraFria #GuiaDaInternetBr #IBASE #IBM #internet #JosephLickner #Kremvax #LinhaCruzada #LNCC #Londres #MIT #modem #MTP #NCP #NICBr #PDP #rede #RedeRio #Registrobr #retrocomputing #retrogaming #Retrpolis #revista #RN

#LLM on a #Pentium2 with 128 MB of RAM? Yes. Up to 15M parameters, using the #bitnet architecture, which uses ternary weights (-1, 0, 1) to reduce computational complexity.

#AI #retro #retrocomputing #LLM #llama

From: https://mastodon.social/@mindsConnected/114727256228518845

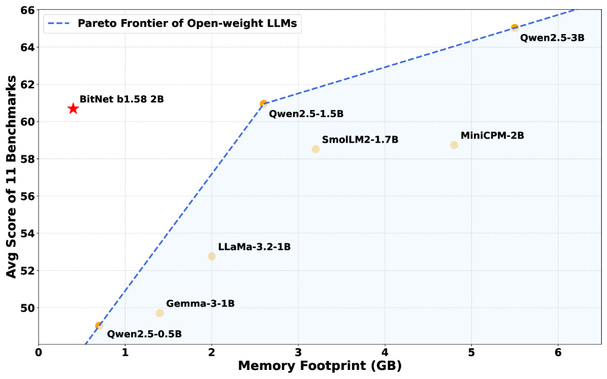
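A minimal sketch of why ternary weights (-1, 0, 1) reduce computational cost: each weight either adds the input, subtracts it, or skips it, so the matrix-vector product needs no multiplications. The function name and the values are illustrative assumptions. Real kernels pack weights into bits and use SIMD rather than a Python loop.

```python
def ternary_matvec(weights, x):
    """Multiply a ternary matrix by a vector using only adds and subtracts."""
    out = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi  # +1 weight: add the input
            elif w == -1:
                acc -= xi  # -1 weight: subtract the input
            elif w != 0:
                raise ValueError("weights must be -1, 0, or 1")
            # 0 weight: the input is skipped entirely
        out.append(acc)
    return out


W = [[1, 0, -1],
     [-1, 1, 1]]
x = [0.5, 2.0, -1.0]
y = ternary_matvec(W, x)  # [1.5, 0.5]
```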

Their public availability allows for widespread experimentation and adaptation. However, a significant barrier hinders their broader adoption: the substantial computational resources required for deployment and inference. State-of-the-art open LLMs typically require large memory footprints, consume considerable energy, and exhibit notable inference latency, rendering them impractical for many edge devices, resource-constrained environments, and real-time applications. #bitnet

Weird thing is that I am on a martial arts mailing list (originally created to mock the newbie rec.martial-arts poseurs) that I have been on since 1987 and I am by far the youngest member of the group. I have no idea why they invited me, weird old croaks probably just wanted a youngster perspective. Everyone on there is still alive and kicking though - not very high kicking, but still!

🔬🤯 1-bit models are a revolution in AI! Neural network weights are stored with just 1 bit, instead of 32 or 16. That means up to 16x smaller size and huge energy savings, while keeping the quality of classic LLMs. The future of AI is lightweight! 🚀 #AI #LLM #quantization #BitNet
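A quick back-of-the-envelope check of the size claim above, as a sketch. The parameter count is an illustrative assumption, and the figures cover weight storage only. Scale factors, embeddings kept at higher precision, and runtime activations are ignored.

```python
def weight_storage_bytes(n_params, bits_per_weight):
    """Bytes needed to store n_params weights at the given bit width."""
    return n_params * bits_per_weight / 8


n_params = 2_000_000_000  # illustrative parameter count (assumption)

fp16 = weight_storage_bytes(n_params, 16)       # 4.0e9 bytes
one_bit = weight_storage_bytes(n_params, 1)     # 2.5e8 bytes
ternary = weight_storage_bytes(n_params, 1.58)  # ternary weights, ~1.58 bits each

ratio_vs_fp16 = fp16 / one_bit  # 16.0
```

Against 32-bit weights the same arithmetic gives a 32x reduction, so the 16x figure corresponds to a 16-bit baseline.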

Rest assured: the authors have all been paid. ^^' #OhWait! #AI #BitNet #SpywareWithASmile ^^'

bitnet.cpp is the official inference framework for 1-bit LLMs (e.g., BitNet b1.58). It offers a suite of optimized kernels that support fast and lossless inference of 1.58-bit models on CPU (with NPU and GPU support coming next). https://github.com/microsoft/BitNet #BitNet

The first release of bitnet.cpp supports inference on CPUs. bitnet.cpp achieves speedups of 1.37x to 5.07x on ARM CPUs, with larger models experiencing greater performance gains. Additionally, it reduces energy consumption by 55.4% to 70.0%, further boosting overall efficiency. On x86 CPUs, speedups range from 2.37x to 6.17x, with energy reductions between 71.9% and 82.2%. Furthermore, bitnet.cpp can run a 100B BitNet b1.58 model on a single CPU, achieving speeds comparable to human reading (5-7 tokens per second), significantly enhancing the potential for running LLMs on local devices.

1 bit instead of billions of parameters: Microsoft's BitNet b1.58 shows that AI can be capable even without high-end hardware. A radical approach with potential for greater sustainability and accessibility. Is this the beginning of the end of GPU dependence? 👉 https://www.all-ai.de/news/top-news24/bitnet-microsofts-cpu-ki-fordert-die-gro%C3%9Fen-heraus #Microsoft #BitNet #KI

#Microsoft has introduced #BitNet b1.58 2B4T, the largest-scale 1-bit #AI model to date, with 2 billion parameters and the ability to run efficiently on #CPU. It's openly available under an MIT license: https://huggingface.co/microsoft/bitnet-b1.58-2B-4T

Microsoft Releases BitNet b1.58 2B4T, a 1.58-Bit AI Model That Runs on Standard CPUs

#AI #Microsoft #LLMs #BitNet #OpenSource #MachineLearning #CPU #DeepLearning #1bitLLM #GenAI

🎉🎊 Behold, the groundbreaking 587th iteration of #BitNet, where #buzzwords meet their ultimate destiny: "Technical Report"! A riveting tale of acronyms, citations, and a plea for #donations, all while you desperately try to figure out if those numbers actually mean anything 📊🤯. Remember, it's not a real tech report without a job ad and some grateful acknowledgments for funding! 💰👏

https://arxiv.org/abs/2504.12285 #TechnicalReport #TechNews #HackerNews #ngated

BitNet b1.58 2B4T Technical Report

https://arxiv.org/abs/2504.12285

#HackerNews #BitNet #b1.58 #2B4T #Technical #Report #arxiv #research #machinelearning #AI

Microsoft open-sourced BitNet b1.58, a 2B-parameter 1-bit AI model. Highly efficient on CPUs such as the M2, it rivals comparable models while using less memory and running faster. It needs a custom framework for now, and GPU support is pending.

#AI #Microsoft #BitNet

Was looking at the source to a very early arXiv paper (https://arxiv.org/abs/hep-ph/9210243). The PDF is unavailable, for reasons that are obscure ("pre-1996 submission which cannot be processed"). But there's a lot of history in the source code: it looks like it was submitted, as a single file, emailed from BITNET to the arXiv via a gateway. It also uses a now-obscure TeX package phyzzx (https://ctan.org/tex-archive/obsolete/macros/phyzzx).

I know I'll sound like a young person when I say this but I'd love to know how that worked in practice and what it was like to be in academia before everyone had access to a TCP/IP internet connection but after internetworked computers were ubiquitous. Sort of like the TV series Halt and Catch Fire but with physicists.