🚀🚀 "Tech genius discovers the astonishing speed of #RPython GC allocation... by writing *another* benchmark program. Because, clearly, the world was waiting with bated breath for this groundbreaking revelation instead of solving actual problems. 🙄"

https://pypy.org/posts/2025/06/rpython-gc-allocation-speed.html #TechGenius #Benchmarking #GCAllocation #GroundbreakingRevelation #ProblemSolving #HackerNews #ngated

#benchmarking

We will participate in a variety of exciting events on the last day of #ISC25, Friday, June 13 – stop by & join the discussion https://go.fzj.de/isc25

Workshops, talks & tutorials on #EdgeAI🤖, #Benchmarking📏, #ParallelProgramming👩‍💻, #GPUProgramming, & the future of #Exascale⚡.

🗓️ Our #ISC25 events on Wednesday https://go.fzj.de/isc25

BoFs, Meet & Greets and Talks on #Exascale supercomputer JUPITER⚡, #QuantumComputing⚛️, #Benchmarking📏, #Diversity & #Inclusion🏳️‍🌈 in HPC and the new Scientific and Innovation Case (SIC) study📚. We also participate in the ISC Poster Sessions. 🖼️

ICYMI: Driving Organizational Transformation Through Business Process Reengineering in Consulting https://kamyarshah.com/driving-organizational-transformation-through-business-process-reengineering-in-consulting/ #Blog #Infographics #benchmarking #bprstrategy

🥱 Oh joy, another riveting post on #OpenBSD #IO #benchmarking that could cure #insomnia faster than counting sheep. 🙄 Spoiler alert: it’s about as exciting as watching paint dry, but with added #tables and graphs! 💻📈

https://rsadowski.de/posts/2025/fio_simple_benckmarking/ #boring #graphs #HackerNews #ngated

OpenBSD IO Benchmarking: How Many Jobs Are Worth It?

https://rsadowski.de/posts/2025/fio_simple_benckmarking/

#HackerNews #OpenBSD #IO #Benchmarking #Jobs #Worth #It #Performance #Testing

Making ChatGPT & Co usable for security-relevant fields of application

@CybAgBund launches a research programme on #KI-#Benchmarking & model adaptation for security-critical applications: #HEGEMON

Apply now: https://t1p.de/et2ro

#Cybersecurity

https://nachrichten.idw-online.de/2025/06/06/making-chatgpt-co-usable-for-security-domain-applications

🥳🎉 BREAKING: #Chrome now tests its #performance against benchmarks it helped design, and surprise surprise, it scores the highest! 😲🤯 Users can now save "millions of hours" - presumably to spend even more time figuring out how to disable auto-updates. 🙄🚀

https://blog.chromium.org/2025/06/chrome-achieves-highest-score-ever-on.html #Benchmarking #Chrome #Updates #UserExperience #TechNews #HackerNews #ngated

Making ChatGPT & Co usable for security-relevant fields of application

@Cyberagentur has launched the #HEGEMON research programme (#Forschungsprogramm) to evaluate and adapt generative #FoundationModels for security-critical applications.

The programme covers the development of new benchmarks for AI models and their application to complex tasks in geoinformation.

More information on the procurement: https://t1p.de/qcuq4

#Cyberagentur #HEGEMON #KI #Sicherheit #Benchmarking #PCP #Geoinformation #Forschung #KITransparenz

Making ChatGPT & Co usable for security-relevant fields of application

@CybAgBund launches a research programme on #KI-#Benchmarking & model adaptation for security-critical applications: #HEGEMON

Apply now: https://t1p.de/qcuq4

#Cybersicherheit

https://nachrichten.idw-online.de/2025/06/05/chatgpt-co-fuer-sicherheitsrelevante-einsatzfelder-nutzbar-machen

It's release time for lintspec!

This new iteration brings a new experimental parser which increases the overall performance by 2-3x and a bunch of minor fixes, especially around the highlighted source code spans in the diagnostics.

https://github.com/beeb/lintspec/releases/tag/v0.6.0

I also added continuous benchmarking with Codspeed.io

RedMagic 10S Pro+ Tops AnTuTu's May 2025 Android Performance Rankings

#AnTuTu #benchmarking #mobileperformance #RedMagic #smartphone

https://blazetrends.com/redmagic-10s-pro-tops-antutus-may-2025-android-performance-rankings/?fsp_sid=43308

#MachineLearning #TimeSeries #Python #DataScience #Benchmarking #NoHypeJustData

'Mastering Modern Time Series Forecasting : The Complete Guide to Statistical, Machine Learning & Deep Learning Models in Python' -> https://valeman.gumroad.com/l/MasteringModernTimeSeriesForecasting

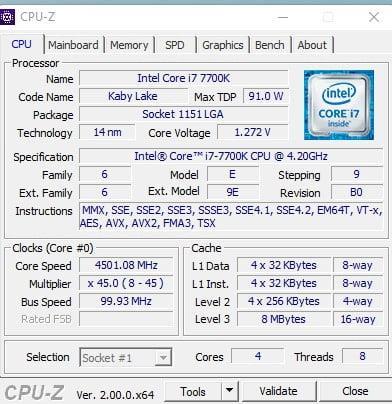

- CPU-Z 2.09: new version of the benchmark

- #CPUZ209 #Benchmarking #RendimientoCPU - #Software - #BenchMark #EvergreenContent #PCPerformance

- The new CPU-Z 2.09 release has arrived! 🚀 It improves hardware detection and fixes bugs, offering more accurate analysis of your CPUs. With support for Intel Meteor Lake and AMD Hawk Point, you can measure performance on the latest technology. 💻 ¡Sigue personaliza...

https://mastertrend.info/cpu-z-2-0-nueva-version-del-benchmark/?fsp_sid=5478

Fascinating and hilarious article about how LLMs' reasoning and agency skills can be evaluated by making them run a vending machine business.

TLDR: they are pretty bad at it, especially over time. And when they do fail, they do so with panache and drama!

https://www.wacoca.com/games/1149378/ 🚀 Antec blends nostalgia with cutting-edge technology! #Antec #evetech #handheld #GAMING #benchmarking #Comparison #components #Game #GameNews #games #GamingNews #GraphicsCards #Hardware #laptop #motherboard #Review #SouthAfrica #TechNews #unboxing #ゲーミング #ゲーミング最新情報 #ゲーム #ゲーム攻略 #ゲーム最新情報

Can Large Language Models Predict Parallel Code Performance?

Exploration of Cryptocurrency Mining-Specific GPUs in AI Applications: A Case Study of CMP 170HX