ContextHound v1.8.0 is out 🎉

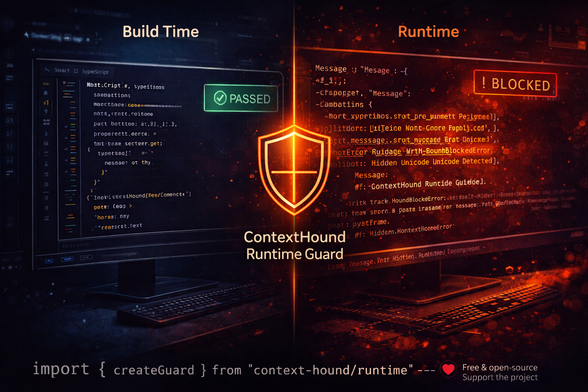

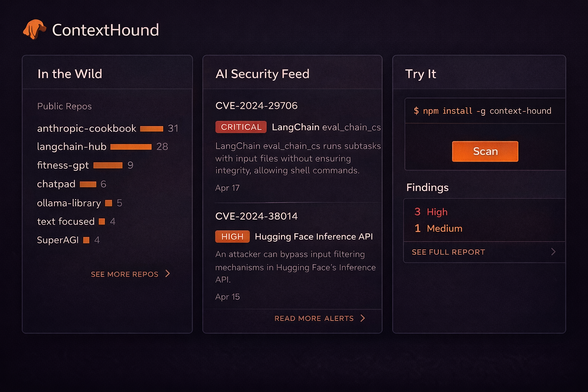

This release adds a Runtime Guard API: a lightweight wrapper that inspects your LLM calls in-process, before the request reaches OpenAI or Anthropic.
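
To give a feel for the general idea, here is a minimal sketch of an in-process guard wrapper. It is not ContextHound's actual API: `guard`, `GuardViolation`, `SUSPICIOUS_PATTERNS`, and `fake_send` are hypothetical names made up for illustration, and the two regex rules are placeholders rather than the project's real rule set.

```python
import re
from typing import Callable, Dict, List

Message = Dict[str, str]

# Hypothetical example rules, NOT ContextHound's real detection logic.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]


class GuardViolation(Exception):
    """Raised when a message matches one of the suspicious patterns."""


def guard(send: Callable[[List[Message]], str]) -> Callable[[List[Message]], str]:
    """Wrap a provider call so every message is inspected before it is sent."""

    def guarded(messages: List[Message]) -> str:
        for message in messages:
            content = message.get("content", "")
            for pattern in SUSPICIOUS_PATTERNS:
                if pattern.search(content):
                    # Block in-process: the request never leaves the application.
                    raise GuardViolation(f"blocked: matched {pattern.pattern!r}")
        return send(messages)

    return guarded


def fake_send(messages: List[Message]) -> str:
    # Stand-in for a real provider client call (assumption for this sketch).
    return f"sent {len(messages)} message(s)"


safe_send = guard(fake_send)
```

Calling `safe_send([{"role": "user", "content": "Hello"}])` forwards the request to the wrapped function, while a message containing "Ignore previous instructions" raises `GuardViolation` before anything is sent.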

Free and open-source. If this is useful to you or your team, a GitHub star or a small donation helps keep development going.

github.com/IulianVOStrut/ContextHound

#LLMSecurity #PromptInjection #CyberSecurity #OpenSource #AIRisk #AppSec #DevSecOps #GenAI #RuntimeSecurity #InfoSec #MLSecurity #ArtificialIntelligence