#ISE2025

#lighting #lightingequipment

#artificiallight #lightingdesign

#ise2025 #integratedsystemseurope

#blackandwhite #blackandwhitephotography #bnw #bnwphotography #monochrome #highcontrast #bnw_of_our_world @bnw_of_our_world

#barcelona_photographers

#fotokomunidad #fotok @fotokweb

#sonya7ii #sonyalpha #sonyphotography

#sigmalens #sigmaart #sigma2470art #sigma2470

#photography #darktableedit

The #ISE2025 lecture is over. Today our students will write the final exam. Fingers crossed. Meanwhile - as our favorite coffee place is on vacation - Cappuccino and Zupfkuchen (highly recommended) together with the @fizise TA team at Intro Café :)

#coffeechallenge #lecture #academiclife @sourisnumerique @enorouzi @GenAsefa @fiz_karlsruhe @KIT_Karlsruhe

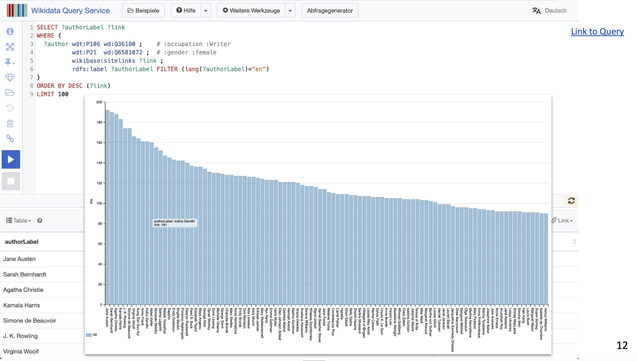

In our last #ISE2025 lecture last week, we were discussing what makes a node "important" in a knowledge graph. A simple heuristic can be borrowed from graph theory or communication theory: degree centrality.

Interestingly, in Wikidata, in-degree centrality ranks Jane Austen as the most "important" female author, while out-degree centrality claims J.K. Rowling as being more "important" ;-)

#knowledgegraphs #semanticweb #graphtheory #feminism #eyeofthebeholder @sourisnumerique @enorouzi

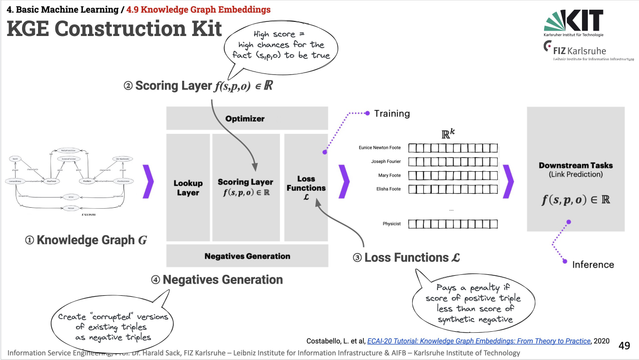
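To make the idea concrete, here is a minimal sketch of in-degree and out-degree centrality in plain Python. The tiny edge list and all node names are made up for illustration (this is not Wikidata data), and normalising by the number of other nodes is just one common convention.

```python
from collections import Counter

def degree_centrality(edges):
    """Return (in_centrality, out_centrality) dicts for a directed edge list."""
    nodes = {node for edge in edges for node in edge}
    # Normalise by the number of other nodes (assumed convention).
    scale = 1 / (len(nodes) - 1) if len(nodes) > 1 else 0.0
    in_degree = Counter(target for _, target in edges)
    out_degree = Counter(source for source, _ in edges)
    in_c = {node: in_degree[node] * scale for node in nodes}
    out_c = {node: out_degree[node] * scale for node in nodes}
    return in_c, out_c

# Hypothetical toy graph of (subject, object) links.
edges = [
    ("novel_a", "author_x"),
    ("novel_b", "author_x"),
    ("film_c", "author_x"),
    ("author_y", "award_1"),
    ("author_y", "award_2"),
    ("author_y", "publisher_p"),
    ("author_y", "genre_g"),
    ("novel_d", "author_y"),
]

in_c, out_c = degree_centrality(edges)
top_in = max(in_c, key=in_c.get)     # node with most incoming links
top_out = max(out_c, key=out_c.get)  # node with most outgoing links
print(top_in, top_out)
```

In this toy graph the two measures pick different "most important" nodes, which is the same effect as in the Austen vs. Rowling observation: many things point to one author, while the other has many outgoing statements.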

One of our final topics in the #ISE2025 lecture was Knowledge Graph Embeddings. How to vectorise KG structures while preserving their inherent semantics?

#AI #KGE #knowledgegraphs #embeddings #semanticweb #lecture @fizise @tabea @sourisnumerique @enorouzi @fiz_karlsruhe
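One way to picture "vectorising" a KG is a translation-based scoring function in the style of TransE. The post does not say which embedding models were covered, so TransE is an assumption here, and the 2-dimensional vectors below are hand-picked for illustration rather than learned.

```python
import math

def transe_score(head, relation, tail):
    """TransE-style plausibility: negative L2 distance between head + relation and tail."""
    return -math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))

# Hypothetical, hand-picked embeddings (a real model would learn them from triples).
entity = {
    "Berlin": [1.0, 0.0],
    "Germany": [1.0, 1.0],
    "Paris": [3.0, 0.0],
    "France": [3.0, 1.0],
}
relation = {"capital_of": [0.0, 1.0]}

true_score = transe_score(entity["Berlin"], relation["capital_of"], entity["Germany"])
false_score = transe_score(entity["Berlin"], relation["capital_of"], entity["France"])
print(true_score, false_score)
```

A triple that holds should score higher (closer to zero) than a corrupted one, because the relation vector translates the head close to the correct tail.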

This week's ISE 2025 lecture focussed on artificial neural networks. In particular, we discussed how to get rid of manual feature engineering and do representation learning from raw data with convolutional neural networks.

#AI #ArtificialNeuralNetworks #cnn #deeplearning #machinelearning #ise2025 @fizise @fiz_karlsruhe @sarahjamielewis @enorouzi
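The core operation of a convolutional layer can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: a single channel, no padding, stride 1, and a hand-set kernel, whereas a CNN would learn its kernel values from data.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# Toy "image": dark left half, bright right half.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-set kernel that responds to a dark-to-bright step from left to right.
kernel = [[-1, 1]]

feature_map = conv2d(image, kernel)
print(feature_map)
```

The feature map is non-zero only where the vertical edge sits, which is the kind of feature that would otherwise have to be engineered by hand.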

#lighting #lightingequipment

#artificiallight #darkbackground #lamplovers #lamp #lampdesign #lightingdesign #microworlds

#ise2025 #integratedsystemseurope

#barcelona_photographers

#sonya7ii #sonyalpha #sonyphotography

#sigmalens #sigmaart #sigma2470art #sigma2470

#photography #darktableedit

In the ISE2025 lecture today, our students learned about unsupervised learning using the example of k-means clustering. One nice hands-on example is image colour reduction based on k-means clustering, as demonstrated in a Colab notebook (based on the Python Data Science Handbook by VanderPlas).

colab notebook: https://colab.research.google.com/drive/1lhdq2pynuwJKoXbspydECuWcPRw3-xxn?usp=sharing

Python Data Science Handbook: https://archive.org/details/python-data-science-handbook.pdf/mode/2up

#ise2025 #lecture @fizise @sourisnumerique @enorouzi #datascience #machinelearning #AI @KIT_Karlsruhe
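For readers without the notebook at hand, the idea of colour reduction with k-means can be sketched in plain Python. This is not the notebook's code: the five "pixels" are invented, the initial centroids are fixed by hand to keep the run deterministic, and a real image would need a numerical library.

```python
def nearest(pixel, centroids):
    """Index of the centroid closest to the pixel (squared Euclidean distance)."""
    return min(
        range(len(centroids)),
        key=lambda c: sum((a - b) ** 2 for a, b in zip(pixel, centroids[c])),
    )

def kmeans(pixels, centroids, iterations=10):
    """Plain k-means: alternate between assigning pixels and averaging clusters."""
    centroids = [tuple(c) for c in centroids]
    for _ in range(iterations):
        labels = [nearest(p, centroids) for p in pixels]
        updated = []
        for c, old in enumerate(centroids):
            members = [p for p, label in zip(pixels, labels) if label == c]
            if members:
                updated.append(tuple(sum(ch) / len(members) for ch in zip(*members)))
            else:
                updated.append(old)  # keep an empty cluster's centroid unchanged
        centroids = updated
    return centroids

# Hypothetical RGB pixels: three reddish, two bluish.
pixels = [(250, 10, 10), (240, 20, 0), (10, 10, 250), (0, 30, 240), (255, 0, 20)]

palette = kmeans(pixels, centroids=[pixels[0], pixels[2]])
# Replace every pixel by its (rounded) cluster colour.
reduced = [tuple(round(v) for v in palette[nearest(p, palette)]) for p in pixels]
print(reduced)
```

After clustering, the five original colours collapse to a two-colour palette, one red and one blue.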

#lighting #lightingequipment

#artificiallight #lamplovers #lamp #lampdesign #lightingdesign

#ise2025 #integratedsystemseurope

#hands #fog #backlit #streetphotography

#blackandwhite #blackandwhitephotography #bnw #bnwphotography #monochrome #highcontrast #bnw_of_our_world

#barcelona_photographers

#sonyalpha #sonyphotography

#sigmalens #sigmaart #sigma2470art

#photography #darktableedit

Tomorrow, we will dive deeper into ontologies with OWL, the Web Ontology Language. However, I've been giving OWL lectures for almost 20 years now - and neither OWL nor the lecture has changed much. So, I'm afraid I'm going to surprise/disappoint the students tomorrow, when I will switch off the presentation and start improvising a random OWL ontology with them on the blackboard ;-)

#ise2025 #OWL #semanticweb #semweb #RDF #knowledgegraphs @fiz_karlsruhe @fizise @tabea @sourisnumerique @enorouzi

@lysander07 What I like about this view is that info is not necessarily complete. Typical real-world data. So, some queries will not show everything there is to know about a topic, while a lot of info may be available for other things. Though it can be extended - "pay as you go".

Some students may also be interested in expressing their research output as a #KnowledgeGraph in its own right - playing an important role in #ScholarlyCommunication

eg view: https://dokie.li/?graph=https://csarven.ca/linked-research-decentralised-web

seeAlso alt text.

In today's ISE 2025 lecture, we will introduce SPARQL as a query language for knowledge graphs. Again, I'm trying out 'Dystopian Novels' as example knowledge graph playground. Let's see if the students know any of them. What do you think? ;-)

#dystopia #literature #ise2025 #semanticweb #semweb #knowledgegraphs #sparql #lecture @tabea @sourisnumerique @enorouzi

Back in the lecture hall again after two exciting weeks of #ESWC2025 and #ISWS2025. This morning, we introduced our students to RDF, RDFS, RDF Inferencing, and RDF Reification.

#ise2025 #semanticweb #semweb #knowledgegraphs #rdf #reasoning #reification #lecture @fiz_karlsruhe @fizise @KIT_Karlsruhe @sourisnumerique @tabea @enorouzi
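A small sketch of what RDFS inferencing does, written in plain Python instead of a triple store. It covers only two entailment patterns (subclass transitivity and type propagation along `rdfs:subClassOf`), and the `:DystopianNovel` vocabulary is a hypothetical example, not the lecture's data.

```python
def rdfs_closure(triples):
    """Apply two RDFS rules until no new triples appear.

    - subclass transitivity: (A subClassOf B), (B subClassOf C) => (A subClassOf C)
    - type propagation:      (x type A), (A subClassOf B)       => (x type B)
    """
    inferred = set(triples)
    while True:
        new = set()
        for s, p, o in inferred:
            if p not in ("rdfs:subClassOf", "rdf:type"):
                continue
            for s2, p2, o2 in inferred:
                if p2 == "rdfs:subClassOf" and s2 == o:
                    new.add((s, p, o2))
        if new <= inferred:  # fixpoint reached
            return inferred
        inferred |= new

# Hypothetical toy graph.
triples = {
    (":1984", "rdf:type", ":DystopianNovel"),
    (":DystopianNovel", "rdfs:subClassOf", ":Novel"),
    (":Novel", "rdfs:subClassOf", ":Book"),
}

closure = rdfs_closure(triples)
for triple in sorted(closure - triples):
    print(triple)
```

The three inferred triples were never stated explicitly; they follow from the schema alone.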

Last week, we continued our #ISE2025 lecture on distributional semantics with the introduction of neural language models (NLMs) and compared them to traditional statistical n-gram models.

Benefits of NLMs:

- Capturing Long-Range Dependencies

- Computational and Statistical Tractability

- Improved Generalisation

- Higher Accuracy

@fiz_karlsruhe @fizise @tabea @sourisnumerique @enorouzi #llms #nlp #AI #lecture

In the #ISE2025 lecture today, we introduced our students to the concept of distributional semantics as the foundation of modern large language models. Historically, Wittgenstein was one of the important figures in the Philosophy of Language, stating that "The meaning of a word is its use in the language."

#philosophy #wittgenstein #nlp #AI #llm #languagemodel #language #lecture @fiz_karlsruhe @fizise @tabea @enorouzi @sourisnumerique #AIart
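"Meaning as use" can be made tangible with a very small count-based sketch: represent each word by the words that occur around it and compare those vectors with cosine similarity. The five-sentence corpus and the window size of 2 are assumptions chosen for illustration; modern language models learn dense vectors instead of raw counts.

```python
import math
from collections import Counter, defaultdict

def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            start, end = max(0, i - window), min(len(tokens), i + window + 1)
            for j in range(start, end):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors

def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm = math.sqrt(sum(x * x for x in u.values())) * math.sqrt(sum(x * x for x in v.values()))
    return dot / norm if norm else 0.0

# Hypothetical toy corpus.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases mice",
    "the dog chases cats",
    "a car needs fuel",
]

vecs = cooccurrence_vectors(corpus)
sim_cat_dog = cosine(vecs["cat"], vecs["dog"])
sim_cat_car = cosine(vecs["cat"], vecs["car"])
print(sim_cat_dog, sim_cat_car)
```

Words used in similar contexts ("cat", "dog") end up with similar vectors, while "car" shares no context with "cat" in this corpus.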

Generating Shakespeare-like text with an n-gram language model is straightforward and quite simple. But don't expect too much of it. It will not be able to recreate a lost Shakespeare play for you ;-) It's merely a parrot, making up well-sounding sentences out of fragments of original Shakespeare texts...

#ise2025 #lecture #nlp #llm #languagemodel @fiz_karlsruhe @fizise @tabea @enorouzi @sourisnumerique #shakespeare #generativeAI #statistics
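A minimal sketch of such a "parrot", assuming a bigram model and a one-line training text (a real experiment would use the complete works). The seed is fixed only to make the run repeatable.

```python
import random
from collections import defaultdict

def train_bigrams(tokens):
    """For every word, collect the words that followed it in the training text."""
    followers = defaultdict(list)
    for w1, w2 in zip(tokens, tokens[1:]):
        followers[w1].append(w2)
    return followers

def generate(followers, start, length, rng):
    """Sample a word sequence; repeated followers are picked proportionally more often."""
    words = [start]
    while len(words) < length and followers.get(words[-1]):
        words.append(rng.choice(followers[words[-1]]))
    return words

text = "all the world's a stage and all the men and women merely players"
tokens = text.split()
followers = train_bigrams(tokens)

generated = generate(followers, start="all", length=8, rng=random.Random(0))
print(" ".join(generated))
```

Every adjacent word pair in the output already occurs in the training text, which is exactly why the model can only recombine fragments and never invent anything new.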

In our #ISE2025 lecture last Wednesday, we learned how n-gram language models use the Markov assumption and maximum likelihood estimation to predict the probability of a word occurring given a specific context (i.e. the n-1 preceding words in the sequence).

#NLP #languagemodels #lecture @fizise @tabea @enorouzi @sourisnumerique @fiz_karlsruhe @KIT_Karlsruhe
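For the bigram case (n = 2), the maximum likelihood estimate is simply a ratio of counts: P(w2 | w1) = count(w1 w2) / count(w1). A minimal sketch, assuming whitespace tokenisation and no smoothing, with a one-line example text:

```python
from collections import Counter

def bigram_mle(tokens):
    """Estimate P(w2 | w1) as count(w1 w2) / count(w1 as a context word)."""
    contexts = Counter(tokens[:-1])  # the last token never serves as context
    bigrams = Counter(zip(tokens, tokens[1:]))
    return {(w1, w2): count / contexts[w1] for (w1, w2), count in bigrams.items()}

tokens = "to be or not to be that is the question".split()
probs = bigram_mle(tokens)

print(probs[("to", "be")])    # "to" is always followed by "be" here
print(probs[("be", "or")])    # "be" is followed by "or" in one of two cases
print(probs[("be", "that")])
```

Unseen bigrams get no entry at all (probability zero), which is the weakness that smoothing techniques address.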

This week, we discussed the central question "Can we 'predict' a word?" as the basis for statistical language models in our #ISE2025 lecture. Of course, I was trying Shakespeare quotes to motivate the (international) students to complete the quotes with "predicted" missing words ;-)

"All the world's a stage, and all the men and women merely...."

#nlp #llms #languagemodel #Shakespeare #AIart #lecture @fiz_karlsruhe @fizise @tabea @enorouzi @sourisnumerique #brushUpYourShakespeare

Last week, our students learned how to conduct a proper evaluation for an NLP experiment. To this end, we introduced a small text corpus with sentences about Joseph Fourier, who is regarded as one of the discoverers of the greenhouse effect, which is responsible for global warming.

#ise2025 #nlp #lecture #climatechange #globalwarming #historyofscience #climate @fiz_karlsruhe @fizise @tabea @enorouzi @sourisnumerique
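The post does not say which metrics or task were used, so the following is only an assumed example: precision, recall and F1 for a hypothetical term-extraction task, comparing a system's output against a hand-made gold standard. Both sets are invented for illustration.

```python
def precision_recall_f1(gold, predicted):
    """Compare a predicted set against a gold-standard set."""
    gold, predicted = set(gold), set(predicted)
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical gold annotations and system output.
gold = {"Joseph Fourier", "greenhouse effect", "global warming"}
predicted = {"Joseph Fourier", "greenhouse effect", "Fourier transform", "heat"}

precision, recall, f1 = precision_recall_f1(gold, predicted)
print(precision, recall, f1)
```

Two of the four predictions are correct (precision 0.5) and two of the three gold terms are found (recall 2/3), which gives an F1 of 4/7.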